The Quicker the _________, the More Instructions a Processor Can Carry Out.

We all think of the CPU as the "brains" of a computer, but what does that actually mean? What is going on inside with the billions of transistors to make your computer work? In this four-part mini-series we'll be focusing on computer hardware design, covering the ins and outs of what makes a computer work.

The series will cover computer architecture, processor circuit design, VLSI (very-large-scale integration), chip fabrication, and future trends in computing. If you've ever been interested in the details of how processors work on the inside, stick around because this is what you want to know to get started.

We'll start at a very high level of what a processor does and how the building blocks come together in a functional design. This includes processor cores, the memory hierarchy, branch prediction, and more. First, we need a basic definition of what a CPU does. The simplest explanation is that a CPU follows a set of instructions to perform some operation on a set of inputs. For example, this could be reading a value from memory, then adding it to another value, and finally storing the result back to memory in a different location. It could also be something more complex like dividing two numbers if the result of the previous calculation was greater than zero.

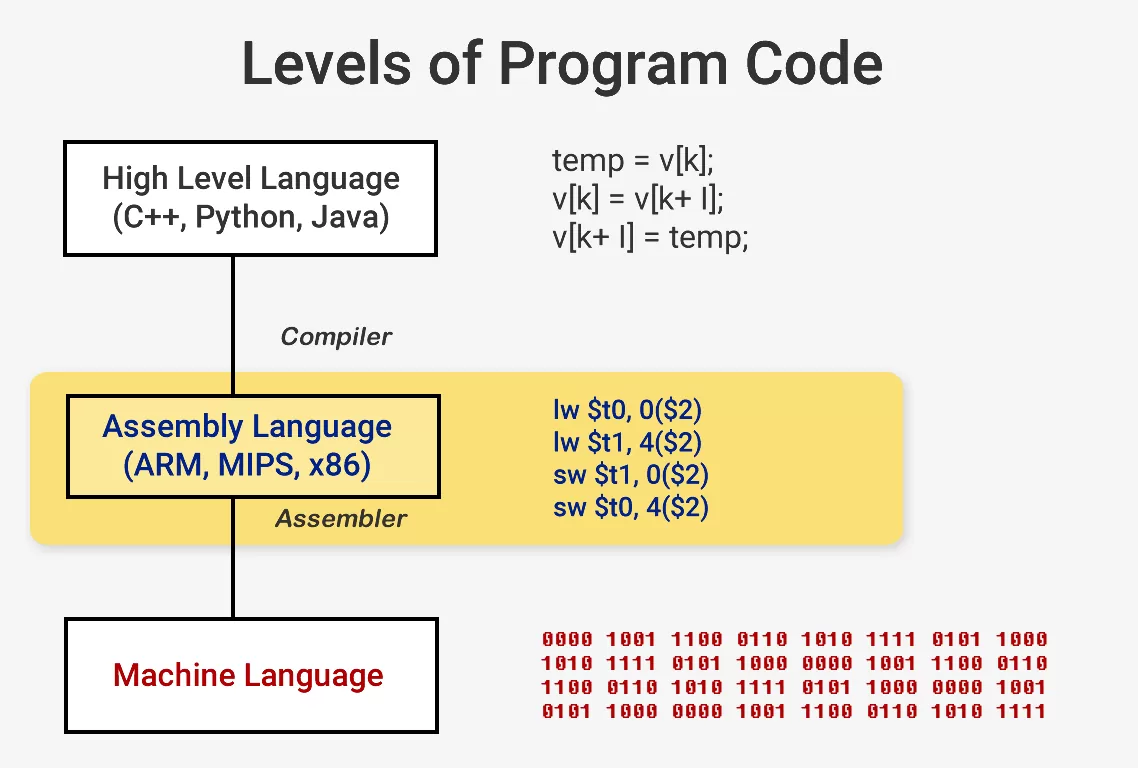

When you want to run a program like an operating system or a game, the program itself is a series of instructions for the CPU to execute. These instructions are loaded from memory and, on a simple processor, they are executed one by one until the program is finished. While software developers write their programs in high-level languages like C++ or Python, the processor can't understand those directly. It only understands 1s and 0s, so we need a way to represent code in this format.

Programs are compiled into a set of low-level instructions called assembly language as part of an Instruction Set Architecture (ISA). This is the set of instructions that the CPU is built to understand and execute. Some of the most common ISAs are x86, MIPS, ARM, RISC-V, and PowerPC. Just like the syntax for writing a function in C++ is different from a function that does the same thing in Python, each ISA has a different syntax.

These ISAs can be broken up into two main categories: fixed-length and variable-length. The RISC-V ISA uses fixed-length instructions, which means a certain predefined number of bits in each instruction determines what type of instruction it is. This is different from x86, which uses variable-length instructions. In x86, instructions can be encoded in different ways and with different numbers of bits for different parts. Because of this complexity, the instruction decoder in x86 CPUs is typically the most complex part of the whole design.
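To make the idea of fixed bit positions concrete, here is a minimal sketch that splits a 32-bit RISC-V register-to-register (R-type) instruction word into its fields. The function name `decode_r_type` is made up for this illustration; the field positions follow the base RISC-V encoding, and the sample word is the encoding of `add x3, x1, x2`.

```python
def decode_r_type(word: int) -> dict:
    """Split a 32-bit RISC-V R-type instruction word into its fixed bit fields."""
    return {
        "opcode": word & 0x7F,          # bits 6..0: broad instruction class
        "rd": (word >> 7) & 0x1F,       # bits 11..7: destination register
        "funct3": (word >> 12) & 0x7,   # bits 14..12: operation selector
        "rs1": (word >> 15) & 0x1F,     # bits 19..15: first source register
        "rs2": (word >> 20) & 0x1F,     # bits 24..20: second source register
        "funct7": (word >> 25) & 0x7F,  # bits 31..25: further operation selector
    }


# 0x002081B3 encodes `add x3, x1, x2`
fields = decode_r_type(0x002081B3)
print(fields)
```

Because every field sits at the same position in every R-type word, the decoder needs nothing more than shifts and masks. A variable-length ISA has to work out where one instruction ends before it can even locate the fields of the next.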

Fixed-length instructions allow for easier decoding due to their regular structure, but limit the total number of instructions that an ISA can support. While the common versions of the RISC-V architecture have about 100 instructions and are open-source, x86 is proprietary and nobody really knows how many instructions there are. People generally believe there are a few thousand x86 instructions, but the exact number isn't public. Despite differences among the ISAs, they all provide essentially the same core functionality.

Now we are ready to turn our computer on and start running stuff. Execution of an instruction actually has several basic parts that are broken down through the many stages of a processor.

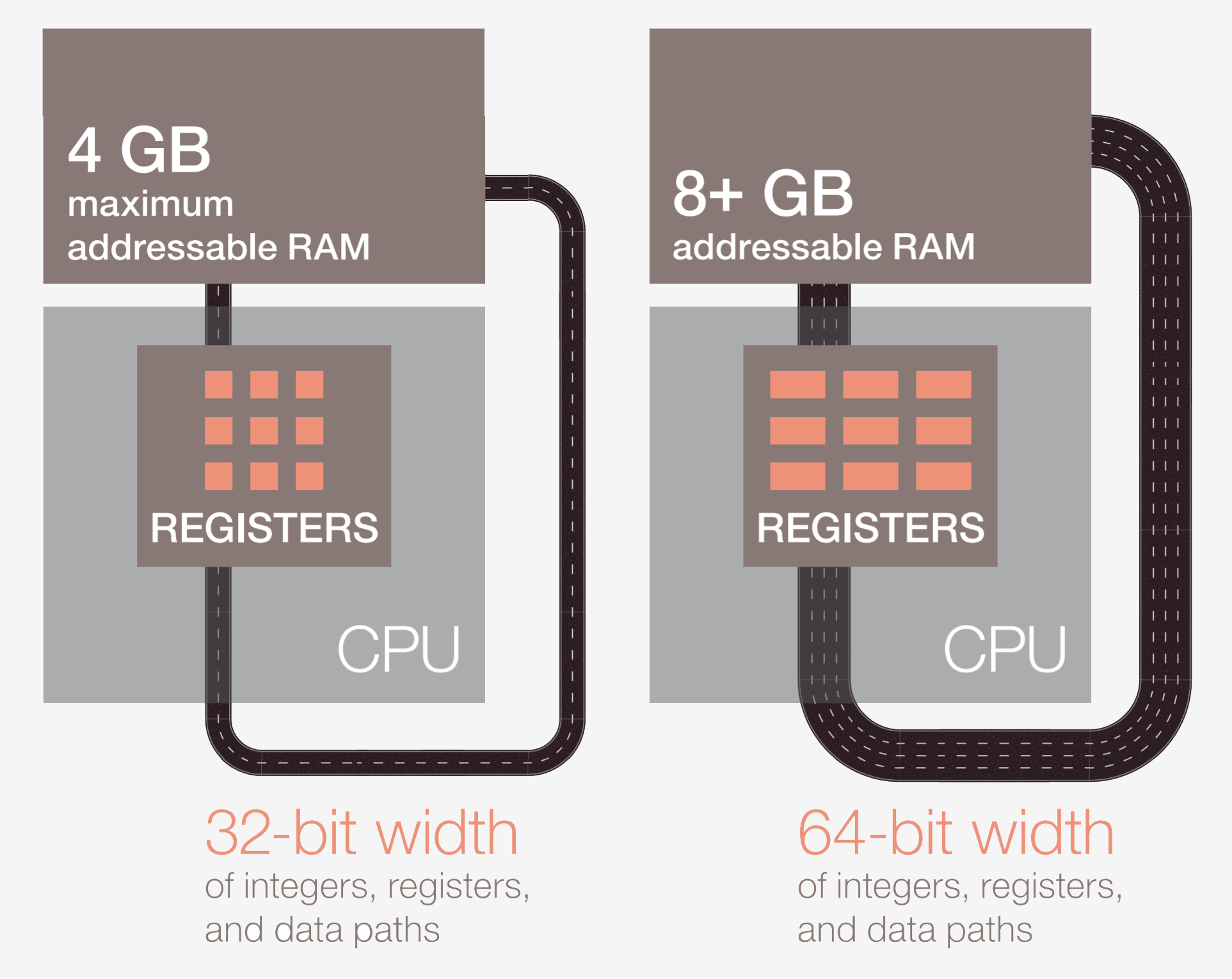

The first step is to fetch the instruction from memory into the CPU to begin execution. In the second step, the instruction is decoded so the CPU can figure out what type of instruction it is. There are many types including arithmetic instructions, branch instructions, and memory instructions. Once the CPU knows what type of instruction it is executing, the operands for the instruction are collected from memory or internal registers in the CPU. If you want to add number A to number B, you can't do the addition until you actually know the values of A and B. Most modern processors are 64-bit, which means that the size of each data value is 64 bits.

After the CPU has the operands for the instruction, it moves to the execute stage where the operation is done on the input. This could be adding the numbers, performing a logical manipulation on the numbers, or just passing the numbers through without modifying them. After the result is calculated, memory may need to be accessed to store the result, or the CPU could just keep the value in one of its internal registers. After the result is stored, the CPU will update the state of various elements and move on to the next instruction.
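The stages described above can be sketched as a toy interpreter. Everything here is an assumption made for illustration: the three-instruction "ISA" (`load`, `add`, `store`), the tuple format, and the register names do not correspond to any real processor.

```python
def run(program, memory):
    """Execute a toy program one instruction at a time and return the memory."""
    registers = {"r0": 0, "r1": 0, "r2": 0}
    pc = 0  # program counter: index of the next instruction to fetch
    while pc < len(program):
        instruction = program[pc]        # fetch
        op, *args = instruction          # decode: work out the instruction type
        if op == "load":                 # memory instruction: memory -> register
            dest, address = args
            registers[dest] = memory[address]
        elif op == "add":                # arithmetic instruction
            dest, a, b = args
            lhs, rhs = registers[a], registers[b]  # collect operands
            registers[dest] = lhs + rhs            # execute, keep result in a register
        elif op == "store":              # memory instruction: register -> memory
            src, address = args
            memory[address] = registers[src]
        else:
            raise ValueError(f"unknown instruction: {op}")
        pc += 1                          # update state and move to the next instruction
    return memory


# Read two values from memory, add them, store the sum at a different location.
program = [
    ("load", "r0", 0),
    ("load", "r1", 1),
    ("add", "r2", "r0", "r1"),
    ("store", "r2", 2),
]
result = run(program, [5, 7, 0])
print(result)  # [5, 7, 12]
```

A real core does each of these steps in dedicated hardware rather than in a loop, but the order of work is the same.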

This description is, of course, a huge simplification, and most modern processors will break these few stages up into 20 or more smaller stages to improve efficiency. That means that although the processor will start and finish several instructions each cycle, it may take 20 or more cycles for any one instruction to complete from start to finish. This model is typically called a pipeline since it takes a while to fill the pipeline and for liquid to go fully through it, but once it's full, you get a constant output.
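As a rough worked example of this fill-then-stream behavior, assume an idealized pipeline that starts exactly one instruction per cycle and never stalls. That is a simplification of the processors described here, and the function name and numbers are purely illustrative. The first instruction needs one cycle per stage, and every later instruction finishes one cycle after the one before it.

```python
def pipelined_cycles(num_instructions: int, num_stages: int) -> int:
    """Cycles for an ideal, stall-free pipeline that starts one instruction per cycle."""
    if num_instructions == 0:
        return 0
    # The first instruction takes num_stages cycles to drain through;
    # each of the remaining instructions completes one cycle later.
    return num_stages + (num_instructions - 1)


def unpipelined_cycles(num_instructions: int, num_stages: int) -> int:
    """Cycles if each instruction must fully finish before the next one starts."""
    return num_instructions * num_stages


print(pipelined_cycles(1000, 20))    # 1019
print(unpipelined_cycles(1000, 20))  # 20000
```

Under these assumptions, a single instruction still takes 20 cycles, but a long stream of them completes at close to one per cycle.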

The whole cycle that an instruction goes through is a very tightly choreographed process, but not all instructions may finish at the same time. For example, addition is very fast while division or loading from memory may take hundreds of cycles. Rather than stalling the entire processor while one slow instruction finishes, most modern processors execute out-of-order. That means they will determine which instruction would be the most beneficial to execute at a given time and buffer other instructions that aren't ready. If the current instruction isn't ready yet, the processor may jump forward in the code to see if anything else is ready.

In addition to out-of-order execution, typical modern processors employ what is called a superscalar architecture. This means that at any one time, the processor is executing many instructions at once in each stage of the pipeline. It may also be waiting on hundreds more to begin their execution. In order to be able to execute many instructions at once, processors will have several copies of each pipeline stage inside. If a processor sees that two instructions are ready to be executed and there is no dependency between them, rather than wait for them to finish separately, it will execute them both at the same time. A related technique is Simultaneous Multithreading (SMT), which Intel markets as Hyper-Threading: it lets instructions from more than one thread share those duplicated execution resources. Intel and AMD processors currently support two-way SMT while IBM has developed chips that support up to eight-way SMT.
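The dependency check behind issuing independent instructions together can be sketched with a small greedy scheduler. This is a deliberately simplified model, not how any particular processor is built. It assumes every instruction takes one cycle, each register is written at most once, and the tuple format `(name, destination, sources)` is invented for this example.

```python
def schedule(instructions, width=2):
    """Group instructions into cycles, issuing up to `width` independent ones per cycle."""
    produced = {dest for _, dest, _ in instructions}  # registers written by the program
    done = set()                                      # results that are already available
    waiting = list(instructions)
    cycles = []
    while waiting:
        issued = []
        for instr in waiting:
            _, _, sources = instr
            # Ready when every source is a program input or an already computed result.
            ready = all(s in done or s not in produced for s in sources)
            if ready and len(issued) < width:
                issued.append(instr)
        if not issued:
            raise ValueError("no instruction can make progress (circular dependency)")
        for instr in issued:
            waiting.remove(instr)
            done.add(instr[1])  # result becomes visible from the next cycle onward
        cycles.append([name for name, _, _ in issued])
    return cycles


program = [
    ("i1", "a", ["x", "y"]),  # a = x + y
    ("i2", "b", ["a", "z"]),  # b = a + z, must wait for i1
    ("i3", "c", ["x", "z"]),  # c = x + z, independent of i1 and i2
    ("i4", "d", ["b", "c"]),  # d = b + c, must wait for i2 and i3
]
plan = schedule(program)
print(plan)  # [['i1', 'i3'], ['i2'], ['i4']]
```

Here `i3` runs ahead of `i2` (out-of-order) and alongside `i1` (superscalar), so four instructions finish in three cycles instead of four.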

To accomplish this carefully choreographed execution, a processor has many extra elements in addition to the basic core. There are hundreds of individual modules in a processor that each serve a specific purpose, but we'll just go over the basics. The two biggest and most beneficial are the caches and the branch predictor. Additional structures that we won't cover include things like reorder buffers, register alias tables, and reservation stations.

The purpose of caches can often be confusing since they store data just like RAM or an SSD. What sets caches apart, though, is their access latency and speed. Even though RAM is extremely fast, it is orders of magnitude too slow for a CPU. It may take hundreds of cycles for RAM to respond with data, and the processor would be stuck with nothing to do. If the data isn't in the RAM, it can take tens of thousands of cycles for data on an SSD to be accessed. Without caches, our processors would grind to a halt.

Processors typically have three levels of cache that form what is known as a memory hierarchy. The L1 cache is the smallest and fastest, the L2 is in the middle, and L3 is the largest and slowest of the caches. Above the caches in the hierarchy are small registers that store a single data value during computation. These registers are the fastest storage devices in your system by orders of magnitude. When a compiler transforms a high-level program into assembly language, it will determine the best way to utilize these registers.

When the CPU requests data from memory, it will first check to see if that data is already stored in the L1 cache. If it is, the data can be quickly accessed in just a few cycles. If it is not present, the CPU will check the L2 and subsequently search the L3 cache. The caches are implemented in a way that they are generally transparent to the core. The core will just ask for some data at a specified memory address and whatever level in the hierarchy has it will respond. As we move to subsequent stages in the memory hierarchy, the size and latency typically increase by orders of magnitude. At the end, if the CPU can't find the data it is looking for in any of the caches, only then will it go to the main memory (RAM).
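The lookup order described above can be sketched as a walk down the hierarchy. The latencies below are hypothetical round numbers chosen only to show the trend, and the model assumes each level is checked one after another and ignores how data gets copied into the caches.

```python
# Hypothetical latencies in cycles, for illustration only.
LEVELS = [("L1", 4), ("L2", 12), ("L3", 40)]
RAM_LATENCY = 200


def access(address, caches):
    """Return (level that answered, total cycles spent) for one read request."""
    cycles = 0
    for name, latency in LEVELS:
        cycles += latency            # time spent checking this level
        if address in caches[name]:
            return name, cycles      # hit: this level responds
    return "RAM", cycles + RAM_LATENCY  # missed every cache, go to main memory


# Each cache is modelled as a set of addresses it currently holds.
caches = {
    "L1": {0x10},
    "L2": {0x10, 0x20},
    "L3": {0x10, 0x20, 0x30},
}
print(access(0x10, caches))  # ('L1', 4)
print(access(0x30, caches))  # ('L3', 56)
print(access(0x40, caches))  # ('RAM', 256)
```

The core only supplies an address; which level answers, and how long that takes, is hidden from it.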

On a typical processor, each core will have two L1 caches: one for data and one for instructions. The L1 caches are typically around 100 kilobytes in total, and the size may vary depending on the chip and generation. There is also typically an L2 cache for each core, although it may be shared between two cores in some architectures. The L2 caches are usually a few hundred kilobytes. Finally, there is a single L3 cache that is shared between all the cores and is on the order of tens of megabytes.

When a processor is executing code, the instructions and data values that it uses most often will get cached. This significantly speeds up execution since the processor does not have to constantly go to main memory for the data it needs. We will talk more about how these memory systems are actually implemented in the second and third installments of this series.
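To illustrate why frequently used values end up in the cache, here is a minimal sketch of a cache that evicts the least recently used (LRU) entry when full. LRU is one common replacement policy, assumed here for simplicity. Real hardware caches work on fixed-size lines and sets and often use cheaper approximations.

```python
from collections import OrderedDict


def hit_rate(addresses, capacity):
    """Fraction of accesses served by a small LRU cache holding `capacity` addresses."""
    cache = OrderedDict()  # keys ordered from least to most recently used
    hits = 0
    for address in addresses:
        if address in cache:
            hits += 1
            cache.move_to_end(address)     # mark as most recently used
        else:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the least recently used entry
            cache[address] = True
    return hits / len(addresses)


trace = [1, 2, 1, 2, 1, 2, 1, 2]  # a loop touching the same two addresses
print(hit_rate(trace, capacity=2))  # 0.75
print(hit_rate(trace, capacity=1))  # 0.0
```

With room for both addresses, only the first two accesses miss. With room for one, each access evicts the value the next one needs, so nothing ever hits.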

Besides caches, one of the other key building blocks of a modern processor is an accurate branch predictor. Branch instructions are similar to "if" statements for a processor. One set of instructions will execute if the condition is true and another will execute if the condition is false. For example, you may want to compare two numbers and if they are equal, execute one function, and if they are different, execute another function. These branch instructions are extremely common and can make up roughly 20% of all instructions in a program.

On the surface, these branch instructions may not seem like an issue, but they can actually be very challenging for a processor to get right. Since at any one time the CPU may be in the process of executing ten or twenty instructions at once, it is very important to know which instructions to execute. It may take 5 cycles to determine if the current instruction is a branch and another 10 cycles to determine if the condition is true. In that time, the processor may have started executing dozens of additional instructions without even knowing if those were the correct instructions to execute.

To get around this issue, all modern high-performance processors employ a technique called speculation. What this means is that the processor will keep track of branch instructions and guess as to whether the branch will be taken or not. If the prediction is correct, the processor has already started executing subsequent instructions, so this provides a performance gain. If the prediction is incorrect, the processor stops execution, removes all incorrect instructions that it has started executing, and starts over from the correct point.

These branch predictors are some of the earliest forms of machine learning since the predictor learns the behavior of the branches as it goes. If it predicts incorrectly too many times, it will begin to learn the correct behavior. Decades of research into branch prediction techniques have resulted in accuracies greater than 90% in modern processors.
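A classic textbook way to learn branch behavior is a two-bit saturating counter, sketched below. This is offered as an illustration of the idea only; it is not a claim about what any vendor's predictor does, and the class and function names are made up for the example.

```python
class TwoBitPredictor:
    """Two-bit saturating counter: 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self):
        self.counter = 2  # start in the "weakly taken" state (an arbitrary choice)

    def predict(self) -> bool:
        return self.counter >= 2

    def update(self, taken: bool) -> None:
        # Move one step toward the observed outcome, saturating at 0 and 3.
        if taken:
            self.counter = min(3, self.counter + 1)
        else:
            self.counter = max(0, self.counter - 1)


def accuracy(outcomes) -> float:
    """Fraction of branch outcomes the predictor guesses correctly."""
    predictor = TwoBitPredictor()
    correct = 0
    for taken in outcomes:
        if predictor.predict() == taken:
            correct += 1
        predictor.update(taken)  # learn from what actually happened
    return correct / len(outcomes)


# A loop branch: taken nine times, then not taken once on exit, repeated ten times.
loop_branch = ([True] * 9 + [False]) * 10
print(accuracy(loop_branch))
```

Because a single surprise only moves the counter one step, the loop exit costs one misprediction per pass without making the predictor forget that the branch is usually taken.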

While speculation offers immense performance gains since the processor can execute instructions that are ready instead of waiting in line on busy ones, it also exposes security vulnerabilities. The famous Spectre attack exploits bugs in branch prediction and speculation. The attacker would use specially crafted code to get the processor to speculatively execute code that would leak memory values. Some aspects of speculation have had to be redesigned to ensure data could not be leaked, and this resulted in a slight drop in performance.

The architecture used in modern processors has come a long way in the past few decades. Innovations and clever design have resulted in more performance and a better utilization of the underlying hardware. CPU makers are very secretive about the technologies in their processors though, so it's impossible to know exactly what goes on inside. With that being said, the fundamentals of how computers work are standardized across all processors. Intel may add their secret sauce to boost cache hit rates or AMD may add an advanced branch predictor, but they both accomplish the same task.

This first look and overview covered most of the basics about how processors work. In the second part, we'll discuss how the components that go into a CPU are designed, and cover logic gates, clocking, power management, circuit schematics, and more.

This article was originally published on April 22, 2019. We've slightly revised it and bumped it as part of our #ThrowbackThursday initiative. Masthead credit: Electronic circuit board close up by Raimudas


Source: https://www.techspot.com/article/1821-how-cpus-are-designed-and-built/
